Impatience is a virtue

Larry Wall (the inventor of Perl) is credited with identifying the three great virtues of a programmer: "laziness, impatience, and hubris."

These virtues are usually shared with a wink. For example, a programmer's "laziness" drives them to get as much work done as possible with the least amount of effort. That is why we write automation scripts (in Perl, probably) or follow the DRY principle to remove redundant and unnecessary effort.

I find the "impatience" and "hubris" virtues more interesting.

The virtue of "impatience" is meant to make us "write programs that don't just react to your needs, but actually anticipate them." It also means that we demand a lot from our software. We want it to be as fast, efficient, and proactive as possible. It can be just as satisfying to double the speed of a program as it was to create "v1.0" in the first place.

Impatience is a virtue that I think we share with our Sugar users. We all want Sugar to be responsive and, at its best, to provide insight that anticipates every Sugar user's need. That's why we have a dedicated team of Performance Engineers who work cross-functionally with Product, Operations, and Support teams with the goal of making Sugar fast. REAL FAST.

The last virtue is "hubris," which means that programmers should take great pride in the code they write and the work they do. So let me exercise a little hubris by bragging about what the Performance team has accomplished over the last year.

Performance Testing Apparatus

We've leveraged our experience of running the Sugar cloud to put together an exhaustive REST API performance test suite of over 15,000 API requests using JMeter. These requests exercise commonly used Sugar functions such as loading List Views, viewing and updating Record Views, performing mass updates, and much more. We pair that suite with Tidbit, which we use to load the Sugar database with 60GB of data. This data set includes half a million Accounts, two million Contacts, and millions more interaction records.

The combination of the JMeter test suite and the Tidbit data set serves as the basis for testing and comparing Sugar performance from version to version and from build to build. The results below were gathered by running this same test suite against multiple Sugar versions: 8.0.1, 8.0.2, 8.3.0, and a pre-release build of Sugar 9.0.0.

Remember: If you are a Sugar Customer or Partner and you want to have a closer look at our JMeter performance test tools, please email developers@sugarcrm.com. Tidbit is an open source project available on our GitHub organization.

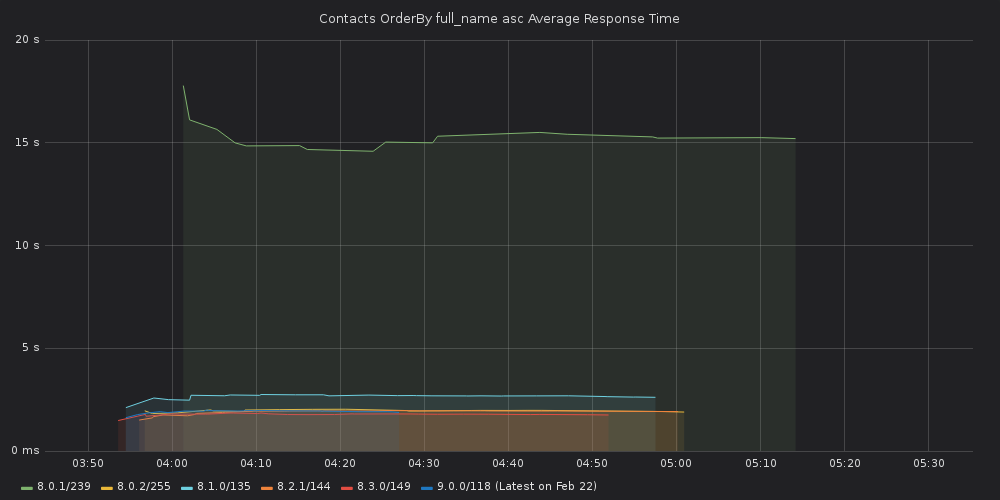

3x to 7x faster Filter API performance since July 2018

The Filter API is one of the most commonly used Sugar REST APIs. It is used to load List Views, Dashlets, and Subpanels, and it is commonly used by integrations that need to synchronize data between Sugar and an external system.

This API can introduce some performance challenges because it allows access to many records at once using arbitrary filter criteria and sort order. The performance of this API can vary widely depending on the data set, the database schema, and the options being used. So this API has been a particular area of focus for our performance engineers, who have been identifying bottlenecks and eliminating them one by one.
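To make the shape of such a request concrete, here is a minimal Python sketch that builds a Filter API call without sending it. The host name `sugar.example.com`, the `v10` API version, the field names, and the helper `build_filter_request` are illustrative assumptions and are not taken from our test suite; check the REST API documentation for your Sugar version before relying on any of them.

```python
import json


def build_filter_request(base_url, module, filter_expr, max_num=20,
                         order_by="date_modified:DESC", fields=("name",)):
    """Return (url, json_body) for a POST to <module>/filter. Nothing is sent."""
    # Assumed API version; newer Sugar releases expose later versions as well.
    url = f"{base_url.rstrip('/')}/rest/v10/{module}/filter"
    body = {
        "filter": filter_expr,        # arbitrary filter criteria
        "max_num": max_num,           # page size
        "offset": 0,                  # start at the first page
        "order_by": order_by,         # sort order
        "fields": ",".join(fields),   # only return the fields we need
    }
    return url, json.dumps(body)


# Hypothetical example: Accounts whose billing city starts with "San".
url, body = build_filter_request(
    "https://sugar.example.com",
    "Accounts",
    [{"billing_address_city": {"$starts": "San"}}],
    fields=("name", "billing_address_city"),
)
print(url)
print(body)
```

Each argument here (filter criteria, sort order, page size, and field list) changes the query Sugar has to run, which is why response times for this one endpoint can differ so much from one request to the next.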

As a result, Filter API performance in Sugar 9 has improved by a factor of 3 to 7 compared to Sugar 8.0.1, which was released less than a year ago in July 2018.

Average API response time cut by more than half since July 2018

The average Sugar 9.0 response time across the 15,000 requests in our test suite is less than half of what it was in 8.0.1. It is about 40% faster than 8.0.2 and about 30% faster than even our Winter '19 release. This means that no matter how you are using or integrating with Sugar, you will see significant responsiveness gains by upgrading to Sugar 9.0 (on-premise) or Spring '19 (cloud) when they come out.

| Release | Average Response Time (ms) |
|---|---|
| 8.0.1 | 1,590 |
| 8.0.2 | 1,030 |
| 8.3.0 | 903 |
| 9.0.0 (pre-release) | 596 |
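For readers who want to check the percentages against the table, here is a small Python sketch of the arithmetic. The averages are copied from the table; the helper name `reduction_pct` is just for illustration.

```python
# Average response times in seconds, copied from the table above.
avg_seconds = {"8.0.1": 1.59, "8.0.2": 1.03, "8.3.0": 0.903, "9.0.0": 0.596}


def reduction_pct(old, new):
    """Percentage by which the average response time dropped from old to new."""
    return (old - new) / old * 100


baseline = avg_seconds["9.0.0"]
reductions = {
    version: reduction_pct(seconds, baseline)
    for version, seconds in avg_seconds.items()
    if version != "9.0.0"
}

for version, pct in reductions.items():
    print(f"9.0.0 vs {version}: {pct:.1f}% lower average response time")
```

This works out to reductions of roughly 63%, 42%, and 34% relative to 8.0.1, 8.0.2, and 8.3.0 respectively.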

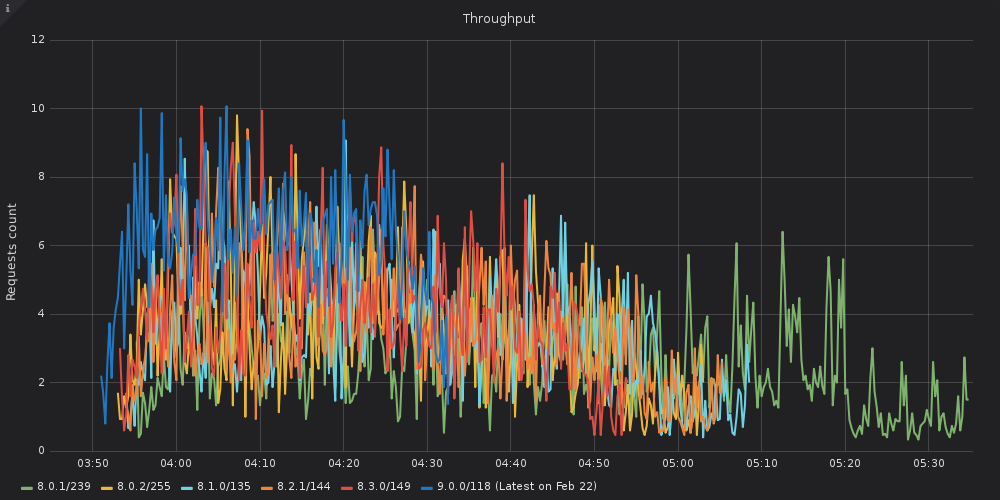

Increased throughput by 70% on same hardware

If you are using Sugar on-premise, there is a substantial increase in request throughput which can translate to lower hosting costs since more users can be hosted on less hardware. Our testing shows that Sugar 9.0 should process 70% more requests in the same amount of time as the latest Sugar 8.0.2 on-premise release using the same hardware. This should help you stretch hosting dollars further.
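As a rough illustration of what that could mean for capacity planning, the Python sketch below estimates how many servers the same load would need after a 70% throughput gain. It assumes load spreads evenly and scales linearly across identical servers, which real deployments only approximate; the fleet size of 10 and the helper `servers_needed` are hypothetical.

```python
import math


def servers_needed(current_servers, throughput_gain=0.70):
    """Servers needed for the same load if each one handles
    `throughput_gain` (0.70 = 70%) more requests than before."""
    return math.ceil(current_servers / (1 + throughput_gain))


example_fleet = 10  # hypothetical fleet size, not a measured figure
print(servers_needed(example_fleet))
```

Under these assumptions, a load that needed 10 servers would fit on 6. Treat this as a starting estimate and confirm it with your own load tests.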

Our work is never done

This is a really exciting time to be part of the SugarCRM ecosystem. We are adding many new products and capabilities to the SugarCRM portfolio, and our Performance team is here to make sure that all of these products meet and exceed our customers' performance expectations.